Magnitudes of exploration.

Mostly standardize, explorations should drive order of magnitude improvement, and limit concurrent explorations.

Standardizing technology is a powerful way to create leverage: improve tooling a bit and every engineer will get more productive. Adopting superior technology is, in the long run, an even more powerful force, with successes compounding over time. The tradeoffs and timing between standardizing on what works, exploring for superior technology, and supporting adoption of superior technology are the core of engineering strategy.

An effective approach is to prioritize standardization, and explicitly pursue a bounded number of explorations that are pre-validated to offer a minimum of an order of magnitude improvement over the current standard.

Much thanks to my esteemed colleague Qi Jin who has spent quite a few hours discussing this topic with me recently.

Standardization

Standardization is focusing on a handful of technologies and adapting your approaches to fit within those technologies’ capabilities and constraints.

Fewer technologies allow deeper investment in each, and reduce time spent on cross-technology integration. Narrow standards simplify the process of writing great documentation, running effective training, providing excellent tooling, etc.

The benefits are even more obvious when operating technology at scale in production. Reliability is the byproduct of repeated, focused learning (often in the form of incidents and incident remediation), and running at significant scale requires major investment in tooling, training, preparation, and practice.

Importantly, improvements to an already-adopted technology are the only investments an organization can make that don’t create organizational risk. Investing in new technologies creates unknowns that the organization must invest in understanding; investing more in the existing standards is at worst neutral. (This ignores opportunity cost; more on that in a moment.)

I am unaware of any successful technology company that doesn’t rely heavily on a relatively small set of standardized technologies. The combination of high leverage and low risk provided by standardization is fairly unique.

Exploration

Technology exploration is experimenting with a new approach or technology, with clear criteria for adoption beyond the experiment.

Applying its adoption criteria, an exploration either proceeds to adoption or halts with a trove of data:

- proceeds: the new technology is proven out, leading to a migration from the previous approach to the new one.

- halts: the new technology does not live up to its promise, and the experiment is fully deprecated.

This definition is deliberately quite constrained in order to focus the discussion on the value of effective exploration. It’s easy to make the case that poorly run exploration leads to technical debt and organizational dysfunction, just as it’s easy to make a similar case against poorly run standardization efforts.

The fundamental value of exploration is that your current tools have brought you close to a local maximum, but you’re very far from the global maximum. Even if you’re near the global maximum today, it’ll keep moving over time, and trending towards it requires ongoing investment.

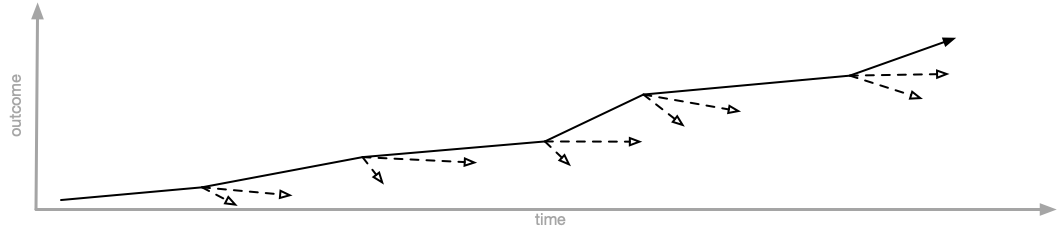

Each successful exploration slightly accelerates your overall productivity. The first doesn’t change things too much, and neither does the second, but as you continue to successfully complete explorations, their technical leverage begins to compound, slowly and subtly becoming the most powerful creator of technical leverage.
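One simple way to see why this compounds, assuming (hypothetically) that each successful exploration multiplies productivity by a factor of $1 + g_i$, where $g_i$ is that exploration’s fractional gain and $P_0$ is today’s productivity:

$$
P_n = P_0 \prod_{i=1}^{n} (1 + g_i)
$$

With an illustrative gain of $g_i = 0.1$ for every exploration, $P_1 = 1.1\,P_0$ barely registers, but $P_5 \approx 1.61\,P_0$ and $P_{10} \approx 2.59\,P_0$. The numbers are made up; the point is that the gains multiply rather than add.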

Tension

While both standardization and exploration are quite powerful, they are often at odds with each other. You reap few benefits of standardization if you’re continually exploring, and rigid standardization freezes out the benefits of exploration.

The post “On internal engineering practices at Amazon” describes the results of over-standardization: once-novel tooling struggles to keep up with the broader ecosystem’s reinvention and evolution, leading to poor developer experiences, weak tooling, and ample frustration. (A quick caveat: I’m certain the post doesn’t accurately reflect all of Amazon’s development experience; a company that large has many different lived experiences depending on team, role, and perspective.)

If over-standardization is an evolutionary dead end, leaving you stranded on top of a local maximum with no means to progress towards the global maximum, a predominance of exploration doesn’t work particularly well either.

In the first five years of your software career, you’re effectively guaranteed to encounter an engineer or a team who refuses to use the standardized tooling and instead introduces a parallel stack. The initial results are wonderful, but finishing the work and then transitioning into operating that technology in production gets harder. This is often compounded when the folks who introduced the technology get frustrated by having to operate it and look to hand off the overhead they’ve introduced to another team. If that fails, they often leave the team or the company, implicitly offloading the overhead.

Balancing these two approaches is essential. Every successful technology company imposes varying degrees of control on introducing new technologies, and every successful technology company has mechanisms for continuing to introduce new ones.

An order of magnitude improvement

I’ve struggled for some time to articulate the right tradeoff here, but in a recent conversation my coworker Qi Jin suggested a rule of thumb that resonates deeply.

Standardization is so powerful that we should default to consolidating on a few platforms and invest heavily in their success. However, we should still pursue explorations, provided they offer at least one order of magnitude improvement over the existing technology.

The improvement shouldn’t come from a single dimension at the cost of meaningful regressions elsewhere. Instead, the new technology should be approximately as strong on all dimensions, and at least one dimension must show at least one order of magnitude improvement.

A few possible examples, although they’d all require significant evidence to prove the improvement:

- moving to a storage engine that is about as fast and as expressive, but is ten times cheaper to operate,

- changing from a batch compute model like Hadoop to use a streaming computation model like Flink, enabling the move from a daily cadence of data to a real-time cadence (assuming you can keep the operational complexity and costs relatively constant),

- moving from engineers developing on their laptops and spending days debugging issues to working on VMs which they can instantly reprovision from scratch when they encounter an environment problem.
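To make the rule concrete, here is a minimal Python sketch of the check, assuming each dimension has already been measured and normalized so that higher is better (for example, scoring cost as its inverse). The 0.9 tolerance standing in for “approximately as strong” and the names `qualifies_for_exploration`, `REGRESSION_TOLERANCE`, and `ORDER_OF_MAGNITUDE` are hypothetical illustrations, not part of the original rule.

```python
# Hypothetical thresholds for the "order of magnitude" rule of thumb.
# "Approximately as strong" is assumed to mean no dimension drops below 90%
# of the current standard; an order of magnitude is a ratio of at least 10x.
REGRESSION_TOLERANCE = 0.9
ORDER_OF_MAGNITUDE = 10.0


def qualifies_for_exploration(
    baseline: dict[str, float], candidate: dict[str, float]
) -> bool:
    """Check whether a candidate technology clears the bar for exploration.

    Both dicts map a dimension name to a score where higher is better
    (e.g. score cost as 1 / cost so that cheaper scores higher).
    """
    if baseline.keys() != candidate.keys():
        raise ValueError("baseline and candidate must cover the same dimensions")

    # Ratio of candidate to current standard on each dimension.
    ratios = [candidate[dim] / baseline[dim] for dim in baseline]

    no_meaningful_regression = all(r >= REGRESSION_TOLERANCE for r in ratios)
    order_of_magnitude_gain = any(r >= ORDER_OF_MAGNITUDE for r in ratios)
    return no_meaningful_regression and order_of_magnitude_gain


# Storage engine example: about as fast and expressive, ten times cheaper.
current = {"speed": 1.0, "expressiveness": 1.0, "cheapness": 1.0}
ten_times_cheaper = {"speed": 0.95, "expressiveness": 1.0, "cheapness": 10.0}
# Twenty times cheaper, but at half the speed: a meaningful regression.
cheap_but_slow = {"speed": 0.5, "expressiveness": 1.0, "cheapness": 20.0}

print(qualifies_for_exploration(current, ten_times_cheaper))  # True
print(qualifies_for_exploration(current, cheap_but_slow))  # False
```

Regressions are checked on every dimension, so a large win on one axis cannot buy its way past a meaningful loss on another. The genuinely hard part remains producing trustworthy numbers to feed into a check like this.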

The most valuable, and unsurprisingly the hardest, part is quantifying the improvement and agreeing that the baselines haven’t degraded. It’s quite challenging to compare the perfect vision of something non-existent with the reality of something flawed but real, which is why explorations are essential to pull both into reality for a measured evaluation.

Limit work-in-progress

Reflecting on my experience with technology change, I believe that introducing one additional constraint into the “order of magnitude improvement” rule makes it even more useful: maintain a fixed limit on the number of explorations that can be ongoing at any given time.

By constraining the explorations we simultaneously pursue to a small number (perhaps two or three for a thousand-person engineering organization), we manage the risk of technical debt accretion, which protects our ability to continue making explorations over time. Many companies don’t constrain exploration, and quickly find themselves in a position where they simply can’t risk making any more.

That’s a rough state to remain in.
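A fixed work-in-progress limit can be sketched as a small admission check. This is a minimal Python illustration rather than a prescribed process: the `ExplorationPortfolio` class, its methods, and the exploration names are hypothetical, the default limit of three simply echoes the “two or three” suggestion above, and it assumes a slot is freed as soon as an exploration is recorded as either proceeding or halting.

```python
from enum import Enum


class Outcome(Enum):
    """The two ways an exploration can end."""

    PROCEEDS = "proceeds"  # proven out; migrate from the previous approach
    HALTS = "halts"  # did not live up to its promise; fully deprecate


class ExplorationPortfolio:
    """Hypothetical tracker enforcing a fixed limit on concurrent explorations."""

    def __init__(self, max_concurrent: int = 3) -> None:
        self.max_concurrent = max_concurrent
        self.active: set[str] = set()
        self.completed: dict[str, Outcome] = {}

    def start(self, name: str) -> bool:
        """Start an exploration only if it isn't already running and a slot is free."""
        if name in self.active or len(self.active) >= self.max_concurrent:
            return False
        self.active.add(name)
        return True

    def conclude(self, name: str, outcome: Outcome) -> None:
        """Record how an active exploration ended and free its slot.

        Raises KeyError if the exploration is not currently active.
        """
        self.active.remove(name)
        self.completed[name] = outcome


portfolio = ExplorationPortfolio(max_concurrent=2)
print(portfolio.start("streaming-compute"))  # True
print(portfolio.start("vm-dev-environments"))  # True
print(portfolio.start("new-storage-engine"))  # False: already at the limit
portfolio.conclude("streaming-compute", Outcome.PROCEEDS)
print(portfolio.start("new-storage-engine"))  # True: a slot opened up
```

A stricter variant might hold the slot until the migration or deprecation is actually finished, which more directly bounds the technical debt the limit is meant to protect against.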

Being able to continue making discoveries is essential, because the true power of exploration is not the first step, but the “nth plus one” step, compounding leverage all along the way.

There isn’t a single rule or approach that captures the full complexities of every situation, but a solid default approach can take you a surprisingly long way.

I’ve been in quite a few iterations of “standardize or explore” strategy discussions, and this is the best articulation I’ve found so far of what works in practice: mostly standardize, explorations should drive order of magnitude improvement, and limit concurrent explorations.