Poking around Contentful.

Slightly related to my notes on build versus buy decisions, I spent some time specifically getting a feel for Contentful over the weekend, and have written up some notes here.

Why Headless CMSes?

There are a lot of headless CMSes out there; the one I’ve personally used most is Airtable. You could argue Airtable isn’t just a headless CMS, and sure, that’s a fine argument to make. As a category, I think headless CMSes do a bunch of things particularly well:

- There are many of them, so it’s unlikely you’ll find your vendor shutting down without a “good enough” replacement to migrate to

- Content management workflows are dynamic living things that change frequently, but generally it’s the steps in the process, not the representation of the process, that are the core competency. Offloading this to a flexible tool that is extensible without engineering investment is a big enabler for the teams operating the workflow and allows engineers to spend more energy on the highest-leverage work.

- Because they’re headless, you own last mile delivery. This allows you to retain control of quality, latency and reliability. It also reduces switching costs, because you won’t require your users to perform a migration to a new URL, tool or whatnot. It further reduces switching costs because they require decoupling the last-mile from the workflow.

- That same decoupling also means you can perform an incremental migration towards or away from the CMS, allowing you to move simple functionality early when you start migrating, and also to move your most sophisticated workflows onto custom tooling if/when you get around to building them.

- The kind of content you store in a CMS is rarely sensitive. It’s usually marketing content or related to a content creation pipeline (say, articles for a magazine). This limits your exposure to risk from a security or privacy breach since you wouldn’t have user data there anyway.

Onboarding

Before going further, obligatory mention that I’ve already deleted the referenced API keys here!

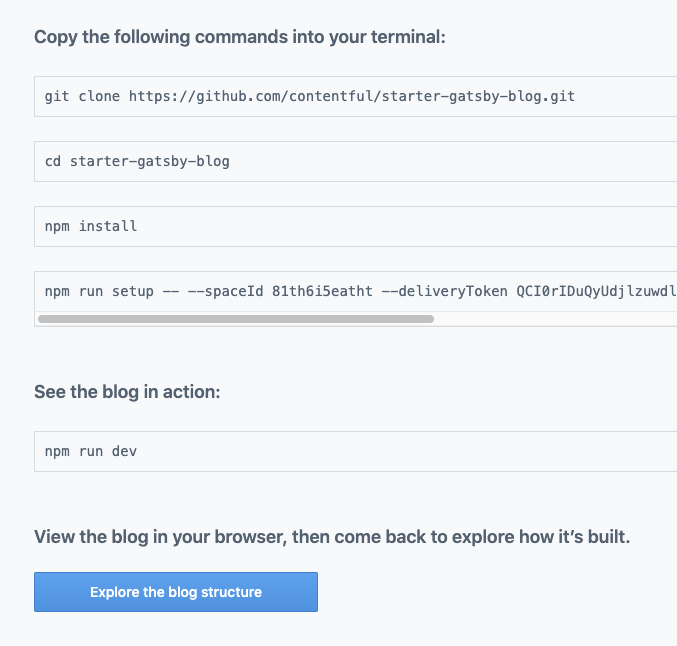

I got started with Contentful by creating a new account and going through their signup and onboarding workflow.

I was pleasantly surprised at how well integrated their onboarding was, promising four easy steps:

git clone https://github.com/contentful/starter-gatsby-blog.git

cd starter-gatsby-blog

npm install

npm run setup -- \
  --spaceId etc \
  --deliveryToken etc \
  --managementToken etc

However, I must admit that I ran into a couple of issues along the way of following the tutorial. Most of these are specific to my local environment which is certainly my fault, but my experience was that the onboarding focused on the happy integration path to the exclusion of addressing problems that could arise along the way.

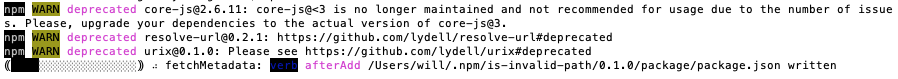

First, the deprecation warnings within their repository were a bit off-putting.

Because you’re generating a static site with Gatsby, I realize that the threat vectors here are fairly minimal, but it highlights to me the ongoing costs of maintaining these sorts of integrations well.

Second, my home computer had an old version of Node.js running, and it failed with a fairly unhelpful error:

/Users/will/git/starter-gatsby-blog/node_modules/@hapi/joi/lib/types/object/index.js:255

!pattern.schema._validate(key, state, { ...options, abortEarly:true }).errors) {

SyntaxError: Unexpected token ...

Nothing about the error explicitly said Node was out of date, but I assumed it was, based on previous debugging experience and the fact that it was failing to parse modern JavaScript syntax (the object spread in the snippet above), so I brew installed the latest version:

brew upgrade nodejs

This sparked a bit of a side-quest to upgrade Node because my Homebrew install apparently broke in my last OS upgrade, but things worked out after some poking around.

After upgrading I finally ran npm run dev and got an error about my spaceId not being set:

TypeError: Cannot read property 'spaceId' of undefined

I assumed this was due to running setup before the version upgrade, so I reran the npm run setup command from earlier. Then I realized it was a disaster all the way down, deleted node_modules/ and started over. That failed again with a different error, so I deleted package-lock.json, yarn.lock and node_modules/ and reinstalled from scratch. That did do the trick, although I did hit a rate limiting error:

12:07:08 - Rate limit error occurred. Waiting for 1532 ms before retrying...

The import was successful.
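For what it’s worth, a message like that usually comes from a wait-and-retry loop: the client is told to slow down, sleeps for the suggested interval, and tries the same request again. Here is a minimal Python sketch of that pattern. It is not Contentful’s actual implementation; `RateLimitError` and `call_with_retry` are hypothetical names I made up for illustration.

```python
import time


class RateLimitError(Exception):
    """Hypothetical error carrying the wait time the server asked for."""

    def __init__(self, retry_after_ms):
        super().__init__(f"rate limited, retry after {retry_after_ms} ms")
        self.retry_after_ms = retry_after_ms


def call_with_retry(request, max_attempts=5, sleep=time.sleep):
    """Call request(); on a rate limit, wait as instructed and try again."""
    for attempt in range(1, max_attempts + 1):
        try:
            return request()
        except RateLimitError as err:
            if attempt == max_attempts:
                raise  # out of attempts, surface the error to the caller
            print(f"Rate limit error occurred. "
                  f"Waiting for {err.retry_after_ms} ms before retrying...")
            sleep(err.retry_after_ms / 1000)  # sleep() takes seconds
```

If the tool works roughly like this, a single rate limit message followed by a success just means one request was delayed, not dropped.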

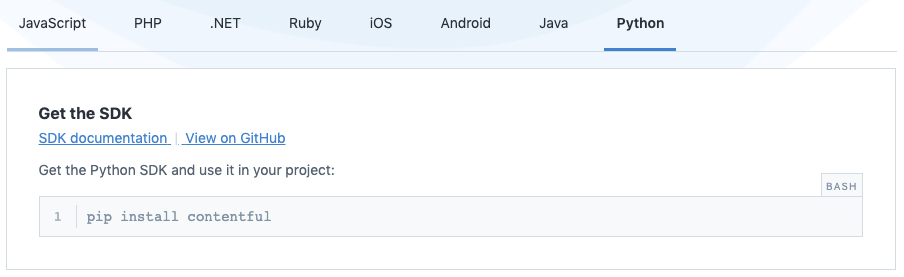

I’m not sure if that rate limit did anything. Seemingly the import was successful, so I’m assuming not. Their installation focused very heavily on SDKs, going so far as not to mention or reference their direct HTTP APIs.

I’m a strong believer that SDKs are the modern 3rd-party API interface that will replace HTTP/JSON and gRPC interfaces, but I think it’s still a bit messy to not include a link to the underlying interface. It’s often much easier to understand the API’s data model and integration flow from the API spec than the SDK, and it is the fallback point for unsupported languages (in this case, Go was the language I noticed missing for my interests).
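To make the fallback concrete, here is a minimal sketch of reading entries straight from the HTTP API, using only Python’s standard library. The endpoint shape and bearer token header follow my understanding of Contentful’s Content Delivery API; the function names and the `blogPost` content type id are illustrative assumptions rather than anything the tutorial shows.

```python
import json
import urllib.parse
import urllib.request

CDN_BASE = "https://cdn.contentful.com"


def entries_url(space_id, environment="master", **query):
    """Build the Content Delivery API URL for listing entries."""
    path = f"/spaces/{space_id}/environments/{environment}/entries"
    qs = urllib.parse.urlencode(query)
    return f"{CDN_BASE}{path}" + (f"?{qs}" if qs else "")


def fetch_entries(space_id, delivery_token, **query):
    """GET entries as parsed JSON. This performs a real network request."""
    request = urllib.request.Request(
        entries_url(space_id, **query),
        headers={"Authorization": f"Bearer {delivery_token}"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Example usage (needs a real space id and delivery token):
# data = fetch_entries("your-space-id", "your-delivery-token",
#                      content_type="blogPost")
# for item in data["items"]:
#     print(item["sys"]["id"], item["fields"].get("title"))
```

Nothing here is specific to Python, which is the point: the same request is a few lines in Go or any other language without an official SDK.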

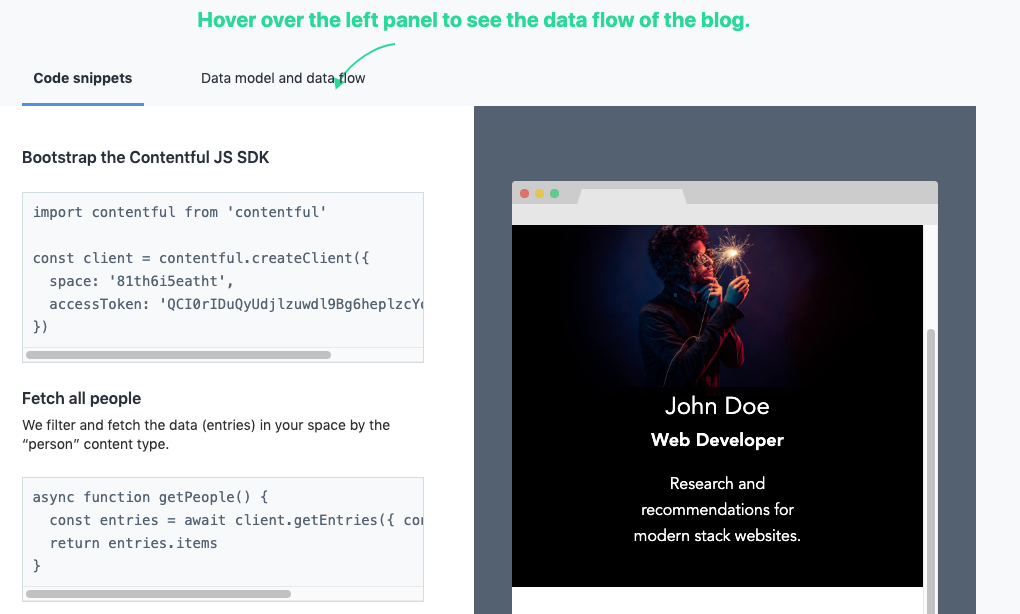

Anyway, API documentation griping aside, next I came to their very helpful step-by-step explanation of how the Gatsby example integrates with their SDK and API.

It’s all pretty straightforward, essentially exporting all the Contentful data using your space and access token.
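Conceptually, “exporting all the data” boils down to paging through a collection until you have seen every item. The sketch below shows that loop in Python. It is not how the starter does it (that goes through Contentful’s own tooling); `fetch_page` is a hypothetical callable standing in for whatever makes the actual request, and I am assuming responses carry `items` and `total` keys with `skip`/`limit` style paging.

```python
def fetch_all(fetch_page, page_size=100):
    """Collect every item from a skip/limit paginated collection.

    fetch_page(skip, limit) is a hypothetical callable that returns a dict
    with "items" (the current page) and "total" (the full collection size).
    """
    items = []
    skip = 0
    while True:
        page = fetch_page(skip, page_size)
        items.extend(page["items"])
        skip += len(page["items"])
        # Stop once everything is collected, or if a page comes back empty.
        if skip >= page["total"] or not page["items"]:
            return items
```

Passing the request function in keeps the paging logic separate from authentication and transport, which also makes it easy to exercise with fake data.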

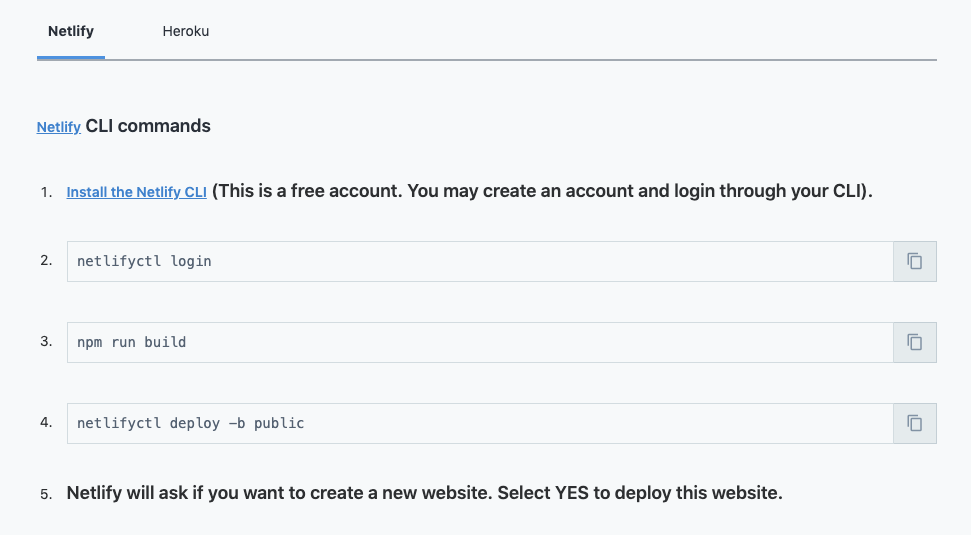

Next it brought us to a deployment page for the sample app, asking us to use either Netlify or Heroku.

Netlify resonated as a hip, up-and-coming deployment target, and Heroku is a wonderful platform, but it felt a bit odd that none of AWS, Alicloud, Azure, or Google Cloud got instructions. Perhaps the assumption is that folks operating on those platforms will be able to figure it out, but I think the same is true for folks on Netlify or Heroku, and most folks are on large cloud providers.

That said, figuring it out for AWS and GCP should be relatively simple: you build the Gatsby site via

./node_modules/.bin/gatsby build

Then move public/ onto Firebase Hosting or Amazon S3.
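For the S3 route, the one thing worth getting right is the content type on each object, since browsers need it to render the pages. Here is a rough Python sketch of the upload step. It assumes you pass in a client created with `boto3.client("s3")` and that the bucket already exists and is configured for static hosting; the function names are mine, not part of any tutorial.

```python
import mimetypes
from pathlib import Path


def plan_uploads(build_dir):
    """Yield (local_path, object_key, content_type) for each built file."""
    root = Path(build_dir)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            key = path.relative_to(root).as_posix()
            guessed = mimetypes.guess_type(path.name)[0]
            yield path, key, guessed or "application/octet-stream"


def upload_site(s3_client, bucket, build_dir="public"):
    """Upload the build output. s3_client is assumed to be boto3.client("s3")."""
    for path, key, content_type in plan_uploads(build_dir):
        s3_client.upload_file(
            str(path), bucket, key, ExtraArgs={"ContentType": content_type}
        )
```

In practice the AWS CLI’s `aws s3 sync` covers the same ground in one command, so this is more about showing that there is no magic in the deploy step.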

Either way, at this point I was done with the initial tutorial.

Data models

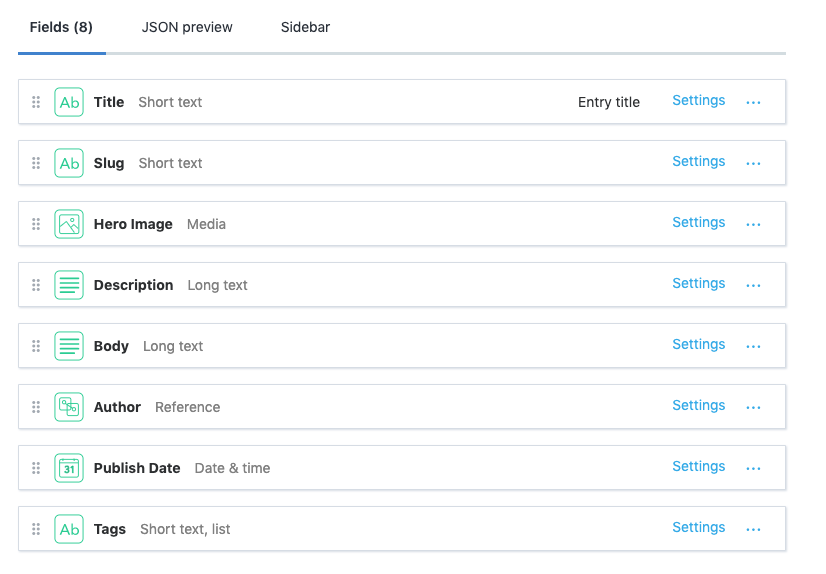

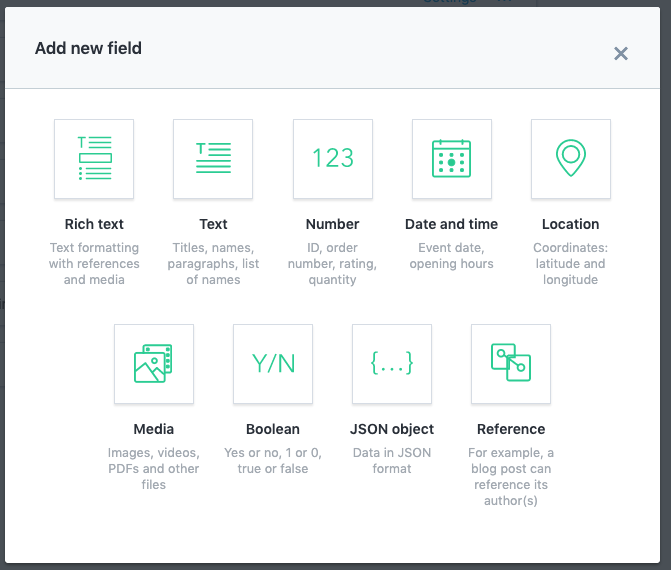

Contentful allows you to make different data models, which are essentially a database schema or spreadsheet headers for a certain type of content.

You can create a number of different types of fields, including the much maligned JSON object.

Altogether, this feels like a very reasonable set of fields and data modeling capabilities for a CMS. There are certainly other types you could imagine wanting, but the ones they support are sufficiently general that I think you could model whatever you need fairly easily.
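To illustrate the “schema for a type of content” framing, here is a small Python sketch of a content type and a check that an entry conforms to it. The field type names (`Symbol`, `Text`, `Date`, `Object`) are ones Contentful uses as far as I know, but the `BLOG_POST` definition, its simplified shape, and the validation rules are my own assumptions for illustration.

```python
# Hypothetical content type, loosely shaped like a content type definition.
BLOG_POST = {
    "name": "Blog Post",
    "fields": [
        {"id": "title", "type": "Symbol", "required": True},
        {"id": "body", "type": "Text", "required": True},
        {"id": "publishDate", "type": "Date", "required": False},
        {"id": "metadata", "type": "Object", "required": False},  # JSON object
    ],
}

# Python types accepted for a few field types (a deliberate simplification).
PYTHON_TYPES = {"Symbol": str, "Text": str, "Date": str, "Object": dict}


def validate_entry(content_type, fields):
    """Return a list of problems with an entry's fields; empty means valid."""
    problems = []
    known = {spec["id"]: spec for spec in content_type["fields"]}
    for field_id, spec in known.items():
        if field_id not in fields:
            if spec["required"]:
                problems.append(f"missing required field: {field_id}")
        elif not isinstance(fields[field_id], PYTHON_TYPES[spec["type"]]):
            problems.append(f"wrong type for {field_id}: expected {spec['type']}")
    for field_id in sorted(fields.keys() - known.keys()):
        problems.append(f"unknown field: {field_id}")
    return problems
```

The JSON object field is the escape hatch here: anything that does not fit the typed fields can go in `metadata`, at the cost of losing validation for it.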

Workflow

The final thing I wanted to do was spend some time getting familiar with the workflow to create and edit content.

As someone who has created three or four versions of my own CMS for lethain.com and now staffeng.com, I appreciate many of the little touches. For example, scheduling a publish date in the future is delightful, and the combination of future publishing and webhooks is a clever interface to decouple content creators and engineers while still getting the right functionality.
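The engineering side of that decoupling can be quite small. Below is a Python sketch of a webhook receiver that rebuilds the site when something is published or unpublished. I am assuming the webhook carries an `X-Contentful-Topic` header with values like `ContentManagement.Entry.publish`, and that a scheduled publish fires the same webhook as a manual one; `trigger_rebuild` is a placeholder for however you build and deploy.

```python
from http.server import BaseHTTPRequestHandler

REBUILD_ACTIONS = {"publish", "unpublish"}
REBUILD_KINDS = {"Entry", "Asset"}


def should_rebuild(topic):
    """True for topics such as "ContentManagement.Entry.publish"."""
    parts = (topic or "").split(".")
    return (
        len(parts) == 3
        and parts[1] in REBUILD_KINDS
        and parts[2] in REBUILD_ACTIONS
    )


def trigger_rebuild():
    """Placeholder: run the Gatsby build and redeploy the site."""
    print("rebuild requested")


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Drain the request body even though only the topic header is used.
        length = int(self.headers.get("Content-Length", 0))
        self.rfile.read(length)
        if should_rebuild(self.headers.get("X-Contentful-Topic")):
            trigger_rebuild()
        self.send_response(204)
        self.end_headers()
```

Serving it locally is one more line with `http.server.HTTPServer(("", 8000), WebhookHandler).serve_forever()`. A real deployment would also want to verify that the request actually came from Contentful before acting on it.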

The editing itself seemed reasonable, especially if you set up the content preview integration.

However, I do find the available workflow to be a bit simpler than I’d expect from an enterprise grade CMS. A typical workflow I’d imagine is something getting drafted, then peer reviewed, then reviewed by an editor, then deployed. You can do parts of this, for example only allowing editors the permissions to “publish” content, but it’s fairly constrained and more importantly doesn’t facilitate the easy handoffs across each step in your workflow.

I imagine you’d end up doing something along the lines of creating a workflow content type, adding a reference to the workflow stages in your content, and integrating with webhooks to notify the appropriate folks based on the stage it entered, but that feels a bit awkward to ask each user to model and integrate for what I imagine is a fairly common feature request.
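Here is roughly what I have in mind, as a Python sketch. The stage names, the groups to notify, and the `notify` callback are all hypothetical; in a real setup the stage would live in a field on the entry and `on_stage_change` would be called from a webhook receiver.

```python
# Hypothetical stages, in order, and the group to notify on entering each.
STAGES = ["draft", "peer_review", "editor_review", "published"]
NOTIFY = {
    "peer_review": "peers",
    "editor_review": "editors",
    "published": "authors",
}


def advance(stage):
    """Return the stage after `stage`; published content stays published."""
    index = STAGES.index(stage)  # raises ValueError for an unknown stage
    return STAGES[min(index + 1, len(STAGES) - 1)]


def on_stage_change(entry_id, new_stage, notify):
    """Tell the right group that an entry has entered their stage."""
    group = NOTIFY.get(new_stage)
    if group:
        notify(group, f"Entry {entry_id} entered {new_stage}")
```

It is not much code, which is sort of my point: every team that wants review handoffs ends up writing some version of this themselves.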

Compared to Airtable

As a long-term user of Airtable, it was interesting to play around with a more focused headless CMS solution. Airtable has a blog post on how to use it as a headless CMS with Gatsby, but in many ways I think Airtable struggles to explain itself. Wikipedia struggles to describe it too, claiming it’s a spreadsheet-database hybrid, with features of a database but applied to a spreadsheet.

Altogether, I really enjoyed poking around with Contentful a bit, and can definitely see how it could be a powerful toolkit for folks to build on.